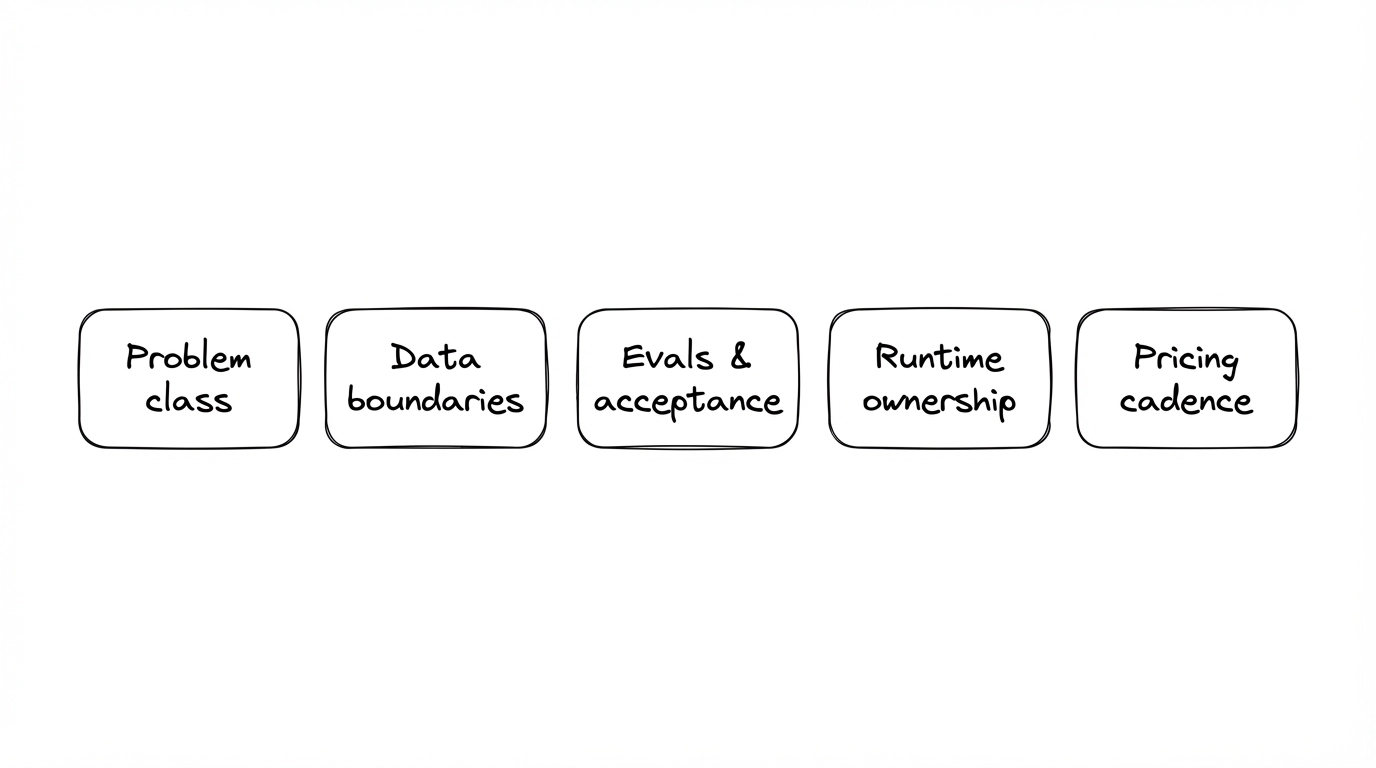

Five Alignments Before Comparing AI Software Development Solutions Proposals

1. Every deck looks full—what actually differs?

When you search for AI software development solutions, every page promises an end-to-end stack; in an RFP, five proposals all list models, integrations, launch, and ops. What moves cost and risk is rarely a buzzword. It is whether you align on the five areas below before you compare quotes; if you don’t, you pay a hidden engineering tax in meetings and rework. This is a decision framework, not a vendor scorecard.

2. Alignment 1: what class of AI problem are you solving?

Copilot-style assistance, internal workflow automation, and customer-facing chat are different problem classes, and each needs its own architecture and working habits. If the class is fuzzy, the “stages” in “AI Project Tool Matrix: Requirements, Design, and Development” won’t land. Write the user task chain first, then choose models and APIs. Skipping that step belongs to the same family as “requirements not locked” in “5 Pitfalls When Using AI for Projects.”

3. Alignment 2: where data comes from, where it may go, who owns risk

Decks often cover “private deployment / compliance” in one line. You need alignment on three things: whether tuning uses your data, where prompts and logs live, and which systems an answer traverses. Without those boundaries, there is no operational security or handoff story. Skip the legal treatises, but make sure procurement and engineering share one data-flow sketch.
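One way to make that shared data-flow sketch concrete is to write each flow down as a small record and list whatever is still unresolved. The following is a minimal sketch, not a compliance tool: the `DataFlow` fields, the example systems, and the two checks are all illustrative assumptions, not anything a particular vendor or regulation prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    """One arrow in the data-flow sketch (all fields are hypothetical)."""
    name: str               # what moves, e.g. "user prompts"
    source: str             # system the data leaves
    destination: str        # system the data enters
    leaves_boundary: bool   # True if it crosses out of your own infrastructure
    used_for_tuning: bool   # True if the vendor may tune models on it
    risk_owner: str         # accountable person or team; "" means not agreed yet


def open_questions(flows):
    """Return one plain-language question per unresolved point."""
    questions = []
    for flow in flows:
        if not flow.risk_owner:
            questions.append(f"{flow.name}: who owns the risk?")
        if flow.leaves_boundary and flow.used_for_tuning:
            questions.append(
                f"{flow.name}: is tuning on this data contractually allowed?"
            )
    return questions


# Example flows, invented for illustration only.
flows = [
    DataFlow("user prompts", "web app", "vendor model API", True, False, "engineering"),
    DataFlow("prompt logs", "vendor model API", "vendor log store", True, True, ""),
]

for question in open_questions(flows):
    print(question)
```

The point of the structure is that procurement and engineering argue over the same list of flows, and any flow with an empty owner is visible before the quotes are compared.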

4. Alignment 3: what “better” means—evals and acceptance

Without evaluation criteria, “go-live” just means demo-ready. “5 Early Warning Signs of Low-Quality Delivery” applies strongly to AI programs. At minimum, pick a few representative tasks, agree on a baseline and a regression path (even a coarse one), and put them in an attachment. That is more useful than “use the latest model.”
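A coarse baseline and regression path can be as small as a pass/fail table per representative task. The sketch below assumes each task has already been judged pass or fail by whatever method you agreed on; the task names, the results, and the `min_pass_rate` threshold are hypothetical placeholders.

```python
def pass_rate(results):
    """Fraction of tasks that passed; results maps task name -> bool."""
    return sum(results.values()) / len(results)


def regressions(baseline, candidate):
    """Tasks that passed in the baseline but fail (or are missing) in the candidate."""
    return sorted(
        task for task, passed in baseline.items()
        if passed and not candidate.get(task, False)
    )


def accept(baseline, candidate, min_pass_rate):
    """Accept only if the candidate meets the agreed rate and breaks nothing."""
    return (
        pass_rate(candidate) >= min_pass_rate
        and not regressions(baseline, candidate)
    )


# Hypothetical results for three representative tasks.
baseline = {
    "refund_policy_question": True,
    "order_status_lookup": True,
    "escalate_to_human": False,
}
candidate = {
    "refund_policy_question": True,
    "order_status_lookup": False,
    "escalate_to_human": True,
}

print(regressions(baseline, candidate))
print(accept(baseline, candidate, min_pass_rate=0.6))
```

Here the candidate has the same overall pass rate as the baseline but breaks a task that used to work, so it is rejected. That is the case a pass rate alone would hide, and the reason to write the regression rule into the attachment.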

5. Alignment 4: who owns prompts after launch, who carries incidents, who updates knowledge

Delivery isn’t launch day. If nobody internally owns prompts and knowledge updates, even a strong vendor becomes a permanent outsourced ops team. “Using AI for Projects: Build In-House vs Outsource vs Pro Team” gives the macro picture; here, force a runtime RACI (who is responsible, accountable, consulted, and informed). For crisp boundaries, “Why Flat Monthly Fee Engineering Fits Growing Teams” gives shared language for who is tier-1 and who is tier-2.
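A runtime RACI can be checked mechanically once it is written down. The sketch below is illustrative only: the three responsibilities come from this section's heading, while the role names and the rule that every item needs a named accountable party are assumptions.

```python
# Responsibilities taken from the heading of this section.
RESPONSIBILITIES = ["prompt changes", "incident response", "knowledge updates"]

# Hypothetical draft RACI; an empty string means nobody has been named yet.
raci = {
    "prompt changes": {"accountable": "internal product lead", "responsible": "vendor"},
    "incident response": {"accountable": "", "responsible": "vendor"},
}


def ownership_gaps(raci, responsibilities):
    """Return the responsibilities that have no named accountable party."""
    gaps = []
    for item in responsibilities:
        entry = raci.get(item, {})
        if not entry.get("accountable"):
            gaps.append(item)
    return gaps


print(ownership_gaps(raci, RESPONSIBILITIES))
```

Any item this returns is a candidate for the “permanent outsourced ops” failure described above: work the vendor does with no internal owner to decide on it.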

6. Alignment 5: pricing cadence vs your cash flow and roadmap

Milestone, monthly, or time-and-materials: there is no holy grail, only fit to your cash flow and iteration pace. “Hire Software Developers or Buy Predictable Delivery—How to Choose” frames hiring versus buying capacity; “Before You Choose an App Development Company: Four Questions to Filter High-Risk Vendors” front-loads the questions about change and ongoing cost, and AI programs need the same treatment. If tools and POs are in play, read “After AI Dev Tools Hit the PO: Four Questions for Procurement and Engineering” alongside this section: tool budget and solution delivery must share one roadmap vocabulary.

7. Wrap-up

In AI software development solutions, the longest module list doesn’t win. Align problem class, data, evaluation, runtime ownership, and pricing cadence, then compare quotes. Book a Discovery Call to lay your decks side by side, or see plans and pricing for flat-fee / sprint boundaries.

Fig 1: Five alignments—then compare “full stack” lists.

In short: when you search for AI software development solutions, align these five before you debate price.

Want to run projects with AI and skip the trial-and-error? Uranus Lab wires multiple tools along requirements → docs → development → retro, with people and AI working together for smooth, fast delivery. Learn more or book a discovery call / get a free quote.
