Shared Model Service: Three Interfaces Where Cost and Ownership Get Messy

1. Once the model becomes shared capability, the problem stops being only technical

In a single project, many model issues can still be handled ad hoc. Once the capability becomes a shared service used across teams, products, and workflows, the first thing that usually breaks is not output quality. It is three organizational interfaces: who pays for usage, who owns bad outcomes, and who approves change.

The common version looks like this: a customer support assistant, an operations review flow, and an internal knowledge assistant all sit on the same model layer. Before launch, everyone calls that reuse. A few weeks later, finance sees one large bill, the platform team sees aggregate usage, and each business team only sees its own conversions or tickets. Nobody can put cost, ownership, and change on the same table. If those three interfaces stay fuzzy, success creates more friction rather than less.

2. Interface 1: whose usage is it, and where does budget actually land

Platform teams often say, “we only provide the capability; usage belongs to the business.” Business teams often say, “the entry point is not ours, so the full bill cannot be ours either.” The real issue is that many organizations never define whether spend is tracked by product line, team, tenant, business action, or platform-level shared cost.

Without that definition, three kinds of mess appear fast:

- the same usage is treated as infrastructure cost by one team and experiment cost by another

- when spend spikes, teams argue over budget ownership before deciding whether the usage is worth keeping

- month-end reviews produce multiple local explanations but no coherent explanation of the total bill

What usually needs fixing is not a slogan like “central billing,” but three basic moves:

- every call should carry traceable tags such as team, product line, scenario, or tenant ID

- you should decide whether you run showback or chargeback, meaning whether teams first see cost or directly absorb it

- there should be a separate shared-cost bucket for evaluation, gradual rollout, and common caching rather than forcing rough allocation everywhere
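As a rough illustration of the three moves above, the sketch below tags each call, keeps a separate shared-cost bucket, and produces a showback view per team. Everything named here (`CallRecord`, `showback`, the `platform-shared` bucket, and the scenario names) is a hypothetical assumption for illustration, not a reference to any real billing system.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical name for spend that is deliberately not allocated to one team.
SHARED_BUCKET = "platform-shared"
# Hypothetical scenario tags treated as shared cost (evaluation, rollout, caching).
SHARED_SCENARIOS = {"evaluation", "gradual-rollout", "common-cache"}


@dataclass(frozen=True)
class CallRecord:
    """One model call together with the allocation tags it carried."""
    team: str
    product_line: str
    scenario: str
    tenant_id: str
    cost_usd: float


def showback(records):
    """Sum cost per team, routing shared scenarios to the shared bucket."""
    totals = defaultdict(float)
    for record in records:
        if record.scenario in SHARED_SCENARIOS:
            owner = SHARED_BUCKET
        else:
            owner = record.team
        totals[owner] += record.cost_usd
    return dict(totals)


# Example usage with made-up numbers.
example = [
    CallRecord("support", "helpdesk", "ticket-reply", "tenant-a", 1.5),
    CallRecord("support", "helpdesk", "ticket-summary", "tenant-b", 2.0),
    CallRecord("ops", "review", "evaluation", "tenant-a", 0.5),
]
for owner, cost in sorted(showback(example).items()):
    print(f"{owner}: {cost:.2f} USD")
```

Under showback, a report like this is only shown to teams; under chargeback, the same totals would be booked against each team's budget. The shared bucket stays visible as its own line instead of being spread across teams by a rough ratio.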

Shared capability can absolutely use a common pool. But first you need words for it: platform tax, business tax, experiment tax. If you do not separate those, the organization ends up paying through politics instead of accounting.

3. Interface 2: when results go wrong, who carries it first

Once a model service is shared, errors no longer stay at the “model answered badly” level. They move into complaints, bad recommendations, wrong summaries, and broken downstream actions. That is when the usual exchange appears: the platform says, “we only provide the base layer”; the business says, “we are using your capability.”

The real problem is not the blame line itself. It is that many organizations never defined:

- which failures count as platform quality failures

- which count as business rule failures

- who stops the bleeding once users are already affected

- who changes prompts, rules, interfaces, or usage patterns after the incident

At a minimum, this needs three layers:

- platform layer: routing, rate limiting, base availability, shared prompts, common safety guardrails

- business layer: task rules, field mapping, trigger conditions, and whether results flow into downstream actions

- response layer: who can pause the feature first, who briefs operations or support, and who owns the incident write-up
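One way to make the three layers executable is a small ownership table that answers two questions for any failure: which layer it belongs to, and who responds. The sketch below is a minimal version under assumed names; the failure categories and role labels such as `platform-oncall` are hypothetical placeholders, not a prescribed taxonomy.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    PLATFORM = "platform"
    BUSINESS = "business"


@dataclass(frozen=True)
class Response:
    """Response-layer roles for one failure layer."""
    can_pause_first: str    # who can pause the feature first
    briefs_support: str     # who briefs operations or support
    postmortem_owner: str   # who owns the incident write-up


# Hypothetical failure categories, derived from the layer descriptions above.
PLATFORM_FAILURES = {
    "routing", "rate_limiting", "availability",
    "shared_prompt", "safety_guardrail",
}
BUSINESS_FAILURES = {
    "task_rule", "field_mapping", "trigger_condition", "downstream_action",
}

# Hypothetical role names; a real table would name people or rotations.
RESPONSE_TABLE = {
    Layer.PLATFORM: Response("platform-oncall", "platform-lead", "platform-lead"),
    Layer.BUSINESS: Response("business-oncall", "business-lead", "business-lead"),
}


def classify(failure_kind):
    """Return the layer that owns a failure category."""
    if failure_kind in PLATFORM_FAILURES:
        return Layer.PLATFORM
    if failure_kind in BUSINESS_FAILURES:
        return Layer.BUSINESS
    # Unknown categories are surfaced rather than silently assigned.
    raise ValueError(f"unclassified failure kind: {failure_kind!r}")


def response_for(failure_kind):
    """Look up who responds to a given failure category."""
    return RESPONSE_TABLE[classify(failure_kind)]


print(response_for("field_mapping").can_pause_first)
```

Raising on unknown categories is a deliberate choice in this sketch: a failure nobody has classified is exactly the case that otherwise lands on the platform by default.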

If this interface is vague, the platform becomes the permanent sink for every messy case, and business teams become more afraid to use it. If everything gets dumped on business teams instead, the platform never proves it is capability rather than risk. A shared service only counts as “joint ownership” once the SLA, the first responder, and the postmortem owner are written down in a form people can execute.

4. Interface 3: who has the right to ship prompt, policy, and model changes

The most underestimated part of a shared model service is not usage cost but change authority. Once multiple teams depend on the same layer, any “small tweak” can affect everyone.

The usual mess is not that nobody changes anything. It is that too many people can push change:

- the platform adjusts a strategy for global quality and one business line sees its metrics drop

- a business team adds one rule for its own case and other teams begin drifting

- everyone calls it a minor change, but nobody can answer who actually approved it

Once multiple teams depend on the same model layer, the minimum working setup should include:

- a prompt / policy registry so you know what version is live

- a lightweight gradual-rollout rule so not every change hits full traffic at once

- a named rollback owner so somebody can revert before the meeting ends
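The minimum setup above can be sketched in a few dozen lines: a registry entry that records which version is live, a deterministic percentage rollout, and a rollback that only the named owner can trigger. The names `PromptEntry`, `live_version`, and `rollback` are hypothetical, and hashing the request ID into 100 buckets is just one common way to split traffic, assumed here for illustration.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PromptEntry:
    """Registry record for one shared prompt or policy."""
    name: str
    versions: dict = field(default_factory=dict)  # version label -> prompt text
    stable: str = ""          # version serving regular traffic
    candidate: str = ""       # version under gradual rollout, "" if none
    rollout_percent: int = 0  # share of traffic sent to the candidate (0-100)
    rollback_owner: str = ""  # the one person or rotation allowed to revert


def bucket(request_id):
    """Map a request ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def live_version(entry, request_id):
    """Pick the version a given request should see."""
    if entry.candidate and bucket(request_id) < entry.rollout_percent:
        return entry.candidate
    return entry.stable


def rollback(entry, actor):
    """Drop the candidate so all traffic returns to the stable version."""
    if actor != entry.rollback_owner:
        raise PermissionError(f"{actor} is not the rollback owner for {entry.name}")
    entry.candidate = ""
    entry.rollout_percent = 0


entry = PromptEntry(
    name="support-reply",
    versions={"v1": "Answer politely.", "v2": "Answer politely and cite sources."},
    stable="v1",
    candidate="v2",
    rollout_percent=10,
    rollback_owner="platform-oncall",
)
print(live_version(entry, "request-123"))
rollback(entry, "platform-oncall")
print(live_version(entry, "request-123"))
```

Because the bucket is derived from the request ID, the same request always sees the same version during a rollout, which keeps comparisons between stable and candidate traffic interpretable.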

If this interface stays loose, the platform becomes a public area everyone can touch and nobody fully owns. The workable version is simple, not bureaucratic: who can propose, who can approve, who owns rollback.

5. Wrap-up

Once AI software development moves from one project to shared capability, the expensive part is often not model spend but the allocation rules, ownership tables, and change control that were left undefined. Platform work does not end when the service exists. It becomes real only when every call can be allocated, every failure can be layered, and every change can be traced.

If your organization is already showing signs of “bigger bills, fuzzier ownership, and every change turning into negotiation,” book a Discovery Call to map these three interfaces. If you care more about long-term boundaries and predictable collaboration, see plans and pricing.

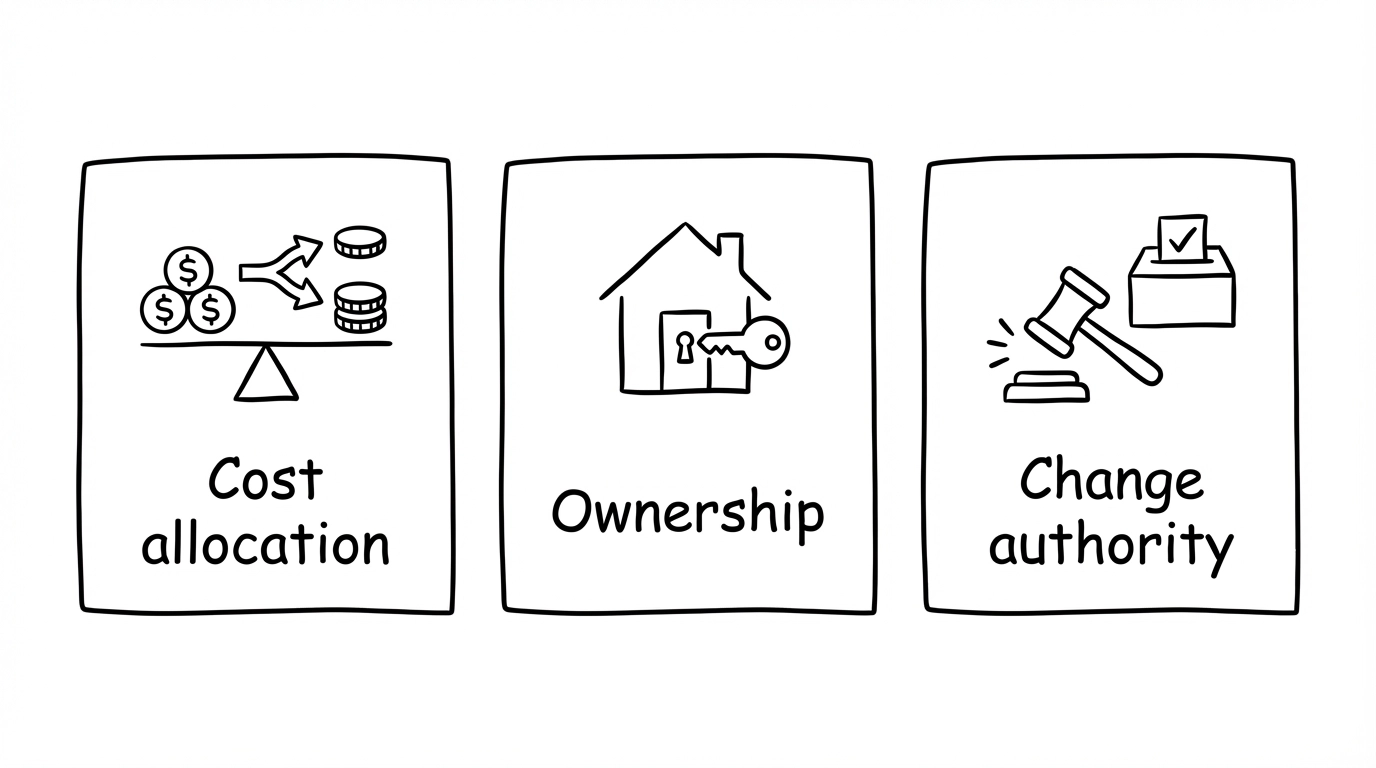

Fig 1: Allocation, ownership, and change authority are the three interfaces that make shared capability messy when left undefined.


In short: The hard part of a shared model service is not building it. It is turning allocation, ownership, and change authority into operating rules people can actually run.
