AI Launch Week One: Why Teams Start Firefighting

1. Real pressure starts after launch

Many teams treat launch day as the milestone. In practice, Day 0-7 is where pressure really shows up: real user input, cross-team handoffs, on-call load, and cost curves all amplify at once. What breaks first is usually not “the model” in isolation, but a set of assumptions nobody bothered to name.

2. Assumption 1: errors will self-correct

The most dangerous thought in week one is “give it two more days.” In production, errors do not self-correct. They spread through three channels first:

- support and operations teams start patching manually

- product teams interpret the issue as “just prompt tuning”

- users form a reliability judgment before engineering names it an incident

What week one needs is not more optimism but a threshold table: which failures need containment within one hour, which within a day, and which can be logged and scheduled. Without that, “let’s monitor it” often means “let’s respond later.”
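A threshold table can be as small as a dictionary. The sketch below is one possible shape, not a prescribed schema: the failure class names and the `triage` helper are hypothetical, and only the three tiers (one hour, one day, log and schedule) come from the text above.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class Threshold:
    action: str                    # "contain" or "log_and_schedule"
    deadline: Optional[timedelta]  # None means no containment deadline


# Hypothetical failure classes; replace them with the ones your feature produces.
THRESHOLDS = {
    "wrong_action_on_customer_data": Threshold("contain", timedelta(hours=1)),
    "degraded_answer_quality": Threshold("contain", timedelta(days=1)),
    "cosmetic_formatting": Threshold("log_and_schedule", None),
}

# Assumption: anything nobody classified yet gets the strictest tier,
# so "let's monitor it" is never the default response.
STRICTEST = Threshold("contain", timedelta(hours=1))


def triage(failure_class: str) -> Threshold:
    """Look up the response tier for a failure class."""
    return THRESHOLDS.get(failure_class, STRICTEST)
```

Defaulting unknown failures to the one-hour tier is a design choice, not a rule: it forces someone to classify a new failure explicitly before it can be downgraded.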

3. Assumption 2: human fallback is always available

Teams love to say “we have human fallback,” but week one usually reveals a different reality:

- the escalation path exists, but nobody watches the SLA

- support can see the complaint, but not the AI context

- engineering sees the failure mode, but not which users are already affected

So fallback exists in diagrams, not in experience. The usable version is four fields written explicitly: who takes over, in how long, with what context, and how unresolved cases escalate. Without those, fallback is just failure moved from the model layer to the human layer.
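One way to keep those four fields honest is to write them down as a record and refuse to call fallback "ready" while any of them is empty. The following is a minimal sketch under that assumption; `FallbackPlan` and `missing_fields` are illustrative names, not part of any existing tool.

```python
from dataclasses import dataclass, fields
from datetime import timedelta
from typing import List


@dataclass
class FallbackPlan:
    owner: str                   # who takes over
    takeover_within: timedelta   # in how long
    context_provided: List[str]  # with what context (e.g. transcript, model output)
    escalation_path: List[str]   # how unresolved cases escalate, in order


def missing_fields(plan: FallbackPlan) -> List[str]:
    """Return the names of fields that are empty, blank, or zero."""
    # Empty strings, empty lists, and timedelta(0) are all falsy in Python.
    return [f.name for f in fields(plan) if not getattr(plan, f.name)]
```

For example, a plan with an owner and an SLA but an empty `context_provided` list would be reported as incomplete, which matches the "support can see the complaint, but not the AI context" failure described above.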

4. Assumption 3: small prompt tweaks won’t break old flows

Prompt and rule changes look tiny, but in a live feature they often change three things at once: routing logic, field extraction, and downstream actions. The most common failure is not “the model is obviously broken.” It is “an old workflow quietly drifted.”

Week one creates two dangerous illusions:

- the change is too small to justify regression

- it worked yesterday, so it should work today

The safer move is lightweight, not heavyweight: keep a fixed replay set of the five to ten most expensive, sensitive, or complaint-prone paths. Run that set before every change. Not to chase perfection, but to avoid spending a week explaining why a “small tweak” quietly broke something expensive.
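A fixed replay set does not need a test framework. The sketch below assumes the feature can be called as a function that takes user input and returns a dictionary of fields (route, action, and so on). `ReplayCase`, `run_replay`, and `fake_pipeline` are hypothetical names, and `fake_pipeline` is only a stand-in for the real prompt-plus-rules pipeline.

```python
from typing import Callable, Dict, List, NamedTuple


class ReplayCase(NamedTuple):
    name: str
    user_input: str
    expected: Dict[str, str]  # only the fields downstream steps depend on


def run_replay(
    cases: List[ReplayCase],
    pipeline: Callable[[str], Dict[str, str]],
) -> List[str]:
    """Return the names of cases whose expected fields drifted."""
    drifted = []
    for case in cases:
        actual = pipeline(case.user_input)
        if any(actual.get(key) != value for key, value in case.expected.items()):
            drifted.append(case.name)
    return drifted


def fake_pipeline(text: str) -> Dict[str, str]:
    """Stand-in for the real pipeline, used only to make the sketch runnable."""
    route = "billing" if "refund" in text.lower() else "general"
    return {"route": route, "action": "open_ticket"}
```

Comparing only the fields that drive routing and downstream actions, rather than the full model output, keeps the set cheap to maintain while still catching the quiet drift described above.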

5. Assumption 4: cost will naturally stabilize

Many teams wait for month-end finance review, but the riskiest AI spend signals often appear by days 3-7:

- real users do not behave like test prompts and keep asking follow-ups

- retries and manual review turn one task into multiple calls

- one “let’s open access and watch” decision can double daily usage

Without alert thresholds in week one, finance sees a bill, product sees growth, engineering sees load, and nobody sees one shared problem. Cost does not auto-stabilize. It expands along the widest path of real usage unless someone defines the line where rollout pauses.
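The "line where rollout pauses" can be written as a small function that everyone reads the same way. This is a sketch under stated assumptions: the daily budget, the growth limit of 2.0 (taken from the "double daily usage" example above), and the `rollout_decision` name are illustrative, not recommended values.

```python
from typing import List


def rollout_decision(
    daily_spend: List[float],
    daily_budget: float,
    growth_limit: float = 2.0,
) -> str:
    """Return 'continue', 'alert', or 'pause' from the most recent day's spend."""
    if not daily_spend:
        return "continue"
    today = daily_spend[-1]
    # Hard line: spending above the agreed daily budget pauses the rollout.
    if today > daily_budget:
        return "pause"
    # Soft line: day-over-day growth at or above the limit raises an alert.
    if len(daily_spend) >= 2 and daily_spend[-2] > 0:
        if today / daily_spend[-2] >= growth_limit:
            return "alert"
    return "continue"
```

Calling `rollout_decision([40.0, 90.0], daily_budget=100.0)` returns `"alert"`, because spend more than doubled while staying under budget. The point is less the arithmetic than having one shared number that finance, product, and engineering all agreed to in advance.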

6. Wrap-up

In enterprise software development, week one is really an organizational test: error containment, fallback ownership, change regression, and cost thresholds all need explicit owners.

If “launched, but still patching holes” sounds familiar, book a Discovery Call to map your Day 0-7 response model; for predictable collaboration and sprint boundaries, see plans and pricing.

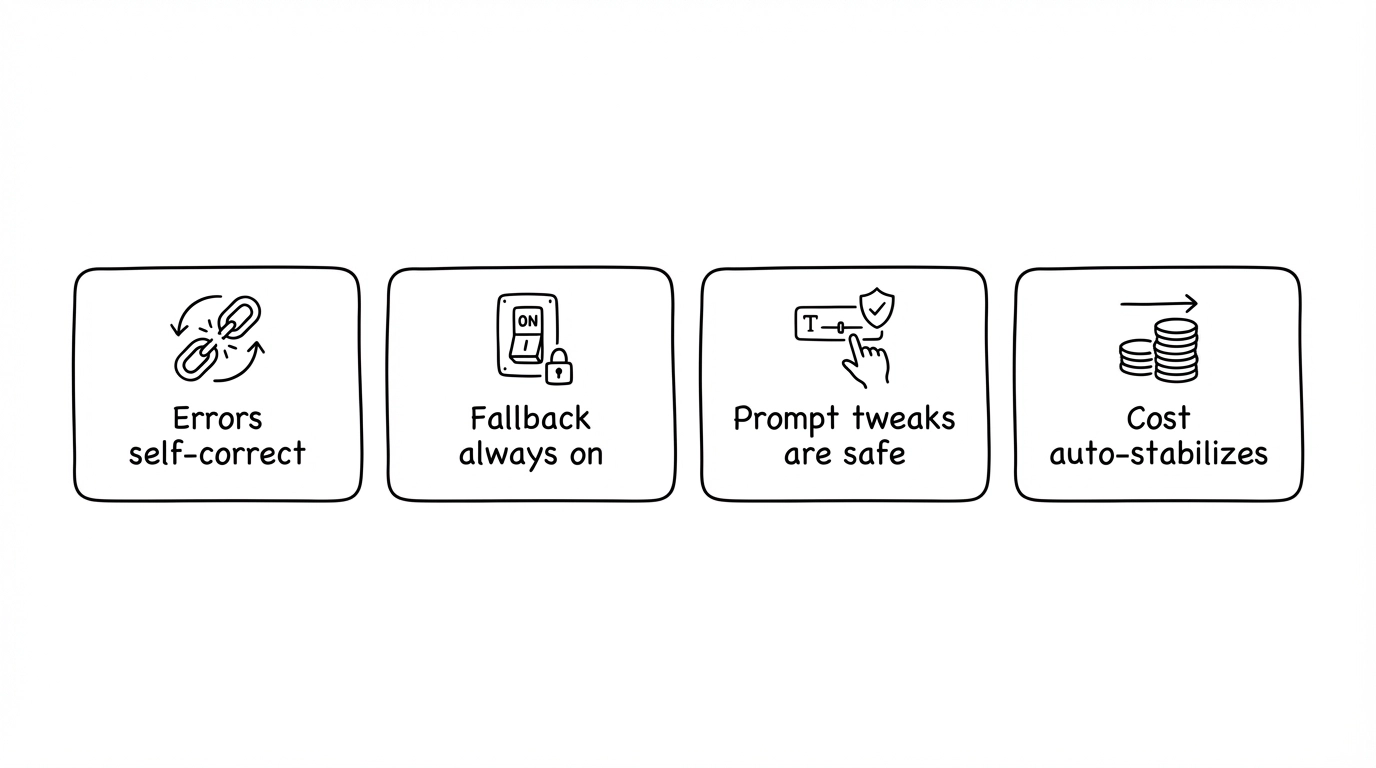

Fig 1: Four default assumptions that fail in launch week.

In short: Week one success has less to do with model hype than with the assumptions you remove before they fail in public.